The Sony Research Award Program (Sony RAP) provides funding for cutting-edge academic research and helps build a collaborative relationship between faculty and Sony researchers. This research collaboration between Sony and the University of Wisconsin-Madison is led by Ryoji Ikegaya of Sony and Professor Mohit Gupta of the University of Wisconsin-Madison, under the Sony RAP.

Ryoji Ikegaya: Can you provide a brief description of your research collaboration project (Sony RAP, 2019-2021), specifically how it differs from the state of the art and its potential use cases?

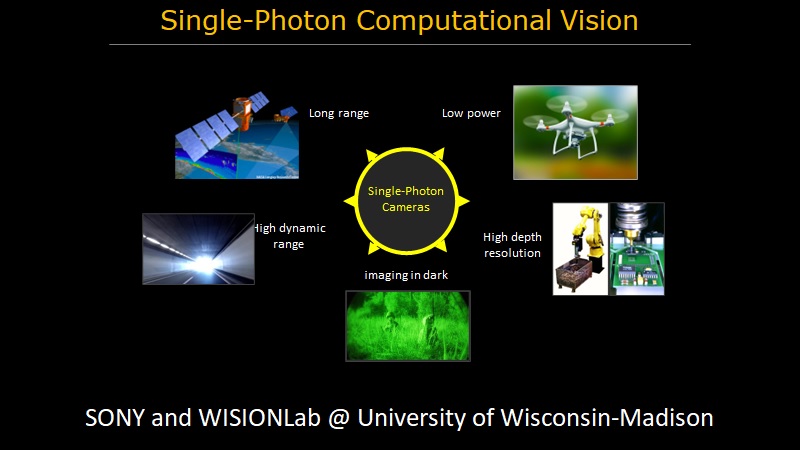

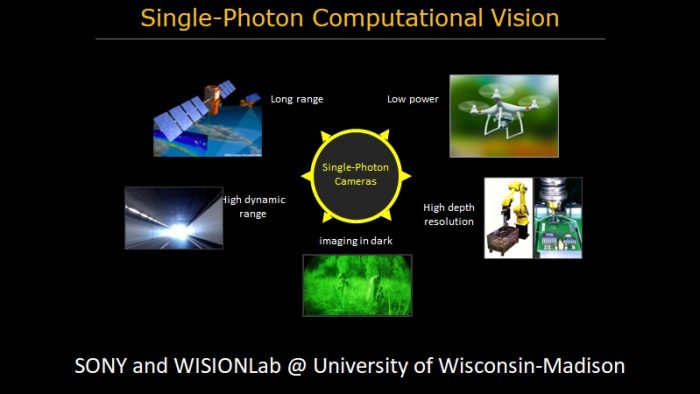

Mohit Gupta: We are designing computer vision and machine learning algorithms for single-photon cameras (SPCs). SPCs capture the visual world at its finest possible granularity – individual photons – opening up the possibility of achieving the highest-fidelity visual perception that physics allows. Our research is taking the first steps towards establishing a comprehensive imaging and computer vision stack around SPCs. If successful, this collaborative program will lead to a novel class of quanta (single-photon) vision algorithms with capabilities that cannot be achieved by conventional cameras. It would enable applications that range from taking high-quality photographs in extreme darkness, to resolving high-speed motion in scientific imaging (e.g., tracking high-speed cell deformation in ultra-low light), to astronomy (e.g., telescopes searching for dim astronomical objects close to bright stars) and other high-performance scientific imaging.

Ryoji Ikegaya: Could you please describe the impact of close collaboration with Sony scientists on your research and research direction?

Mohit Gupta: It has been incredibly rewarding to work closely with Sony researchers, including Kan-san, Ikegaya-san, Mitsunaga-san and Moriuichi-san. The discussions, encouragement and highly informative technical feedback from the Sony team have been instrumental in shaping and refining our research ideas. We hope to continue to work together with world-class Sony scientists to design the next generation of cameras that see our world in a new light!

Ryoji Ikegaya: How would the technology developed from your research collaboration impact the future of image sensors? Could you describe the obstacles to bringing this technology to mass production?

Mohit Gupta: Single-photon cameras have the potential to revolutionize imaging and computer vision across a broad range of applications, including consumer photography, perception in extreme robotics scenarios (e.g., autonomous navigation, drones), and scientific imaging (microscopy and astronomy). However, due to the emerging nature of this technology, there are several challenges that we’d need to address before realizing widespread adoption. The raw photon stream data captured by SPCs does not resemble images captured by conventional cameras and does not always lend itself to conventional vision and ML algorithms. Furthermore, the amount of raw data captured by SPCs is orders of magnitude larger than that of conventional images, making it challenging to operate under constrained bandwidth, power, computational and memory budgets. Finally, SPCs are a nascent technology, and their fabrication processes still need to mature.

Fortunately, we believe that a close collaboration with Sony – one of the world leaders in image sensors – will go a long way toward addressing these challenges and maximizing the practical impact of this research. The technology that is developed as part of this collaborative research, along with the extensive expertise and experience of Sony, will transform SPCs into all-purpose sensors capable of imaging and inference across a broad range of photon-starved to photon-flooded environments, under high-speed motion, and under constrained power and computational budgets. This research, aided by rapid ongoing advances in SPC technology, will create a strong practical impact across diverse domains. These include single-photon 3D imaging systems capable of achieving “laser-scan quality” depth resolution at long distances in bright sunlight, fluorescence lifetime microscopy of live specimens, high-performance (low-blur, super-resolution) photography and robot navigation in dark environments under high-speed motion, and high-level scene understanding on devices with low computational power. While steady engineering advances may lead to a few percentage points of improvement, achieving order-of-magnitude performance gains like these requires a qualitative shift, which will be driven by the new breed of single-photon computational imaging techniques developed as part of this collaboration.